Is Anthropic Pivoting Away from its Core Promise?

When Anthropic launched Claude in March 2023, the firm set itself apart from many other artificial intelligence labs by promising to make safety its first priority. While other labs focused on building ever more powerful and efficient AI systems, Anthropic built its identity around trust.

In announcing Claude's launch, the firm described it as an assistant designed to be helpful, honest, and harmless.

Even the firm's name, derived from the Greek word for “human,” was meant to signal a commitment to human-centered development.

Anthropic Redefines Its Safety Commitment

Anthropic did not want to be seen as just another firm racing toward greater power and scale. It wanted to show the world that strong safety boundaries could lead the way.

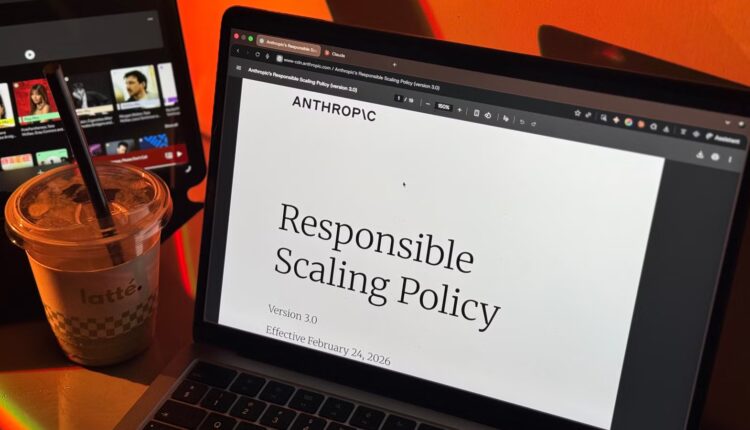

Later that year, the firm formalized this promise in its Responsible Scaling Policy, or RSP.

The RSP was a set of rules the firm pledged to follow as it developed more powerful AI systems, and at its core was a promise no other lab was making.

If a model's capabilities ever outpaced the company's ability to ensure that the system was safe, Anthropic promised to stop training and deploying new models until its safety measures caught up.

This week, Anthropic revised this policy, and with it the promise that formed the foundation of its identity as a safety-first AI company.

The new RSP replaces the previous policy with a framework called the Frontier Safety Roadmap. It still sets out safety goals and risk assessments, but the promise itself has been reworded. Instead of a hard trigger that halts development, the new policy focuses on transparency.

Anthropic commits to disclosing the risks it identifies to the public and explaining its plan to address them. Whether to continue development is left to the company itself.

This shift turns the policy from a binding commitment into a list of public goals. Anthropic still commits to tracking and reporting its own progress, but it no longer commits to pausing if safety risks grow.

Balancing Competitive Pace with AI Safety

Anthropic argues that the shift reflects a larger reality: the danger posed by AI does not depend on any single company. If a safety-focused developer holds back while others push ahead without adequate safeguards, a pause could lead to worse outcomes rather than safer ones.

The revised policy still leaves room for a pause. Anthropic says it will consider pausing if it holds a strong lead over its competitors or if there is substantial evidence of serious danger from advanced AI systems.

But the policy also makes a different promise regarding its competitors. If they move forward without adequate safety measures in place, Anthropic commits to keeping pace with them rather than holding back.

The timing of the change has also drawn attention. On the same day Anthropic issued the updated RSP, US Defense Secretary Pete Hegseth met with CEO Dario Amodei and urged the company to ease its restrictions on military use of its AI.

The message may have carried weight. Anthropic holds a $200 million contract with the Pentagon, and losing that deal could hurt both its revenue and its defense-industry partnerships.

Anthropic’s RSP Update and the Shift Toward Self-Regulation

Anthropic has not suggested that the updated RSP is connected to the meeting, but the timing invites suspicion and raises questions about the role of outside pressure and incentives in the AI industry.

With this change, no major AI lab has a binding commitment to stop development if its safety measures fall behind the capabilities of its models.

Safety language remains common across the industry, but hard-stop requirements are no longer part of it. The question now is whether transparency and self-regulation will be enough as AI capabilities continue to advance.
