Xinzhou Wu’s Vision for NVIDIA’s Autonomous Supremacy

On a crisp morning in early March 2026, a silver Mercedes CLA sedan navigated the winding, fog-draped curves of Highway 84, descending from the hills of Woodside toward the silicon-dense sprawl of San Francisco. In the passenger seat sat Jensen Huang, the leather-jacketed CEO of NVIDIA. Behind the wheel, with his hands hovering inches from the leather-wrapped rim, was Xinzhou Wu, NVIDIA’s Vice President of Automotive.

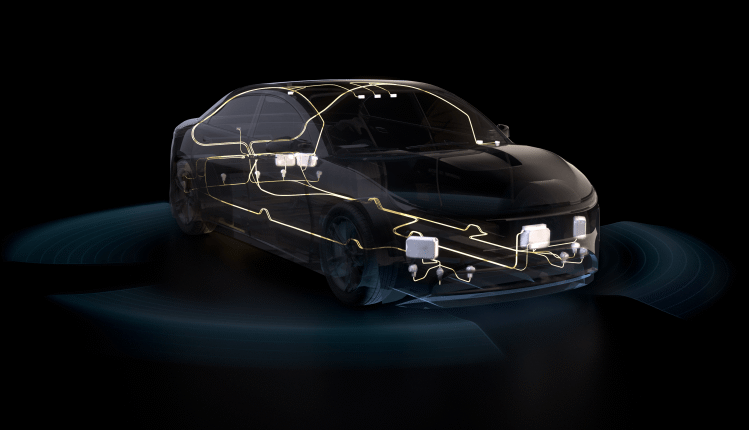

This wasn’t just a commute; it was a high-stakes demonstration of the MB.Drive Assist Pro, the autonomous brain NVIDIA has spent billions to perfect. In an exclusive deep-dive interview following the drive, Wu laid out the blueprint for how NVIDIA plans to win the self-driving war not by building the best car, but by building the best mind.

While Tesla continues its quest for a vertically integrated “Full Self-Driving” (FSD) monopoly and Waymo expands its expensive fleet of custom-built robotaxis, NVIDIA is taking a parallel path. Wu describes NVIDIA’s role as the “universal architecture” of the autonomous era.

“We aren’t in the business of owning fleets or managing car logistics,” Wu said. “Our goal is to provide the AI infrastructure that allows every other automaker, from Mercedes and Volvo to the new wave of EV startups, to compete with Tesla on day one.”

By positioning itself as the seller of “shovels” in the autonomous gold rush, NVIDIA avoids the capital-intensive nightmare of car manufacturing. Instead, it is licensing a full-stack solution: the DRIVE Thor chips, the foundation models for perception, and the cloud-based simulation tools required to validate them.

Generative AI: Moving Beyond “Hand-Coded” Driving

A central theme of Wu’s leadership since joining from XPeng has been the transition to End-to-End Generative AI. Traditional autonomous systems relied on millions of lines of “if-then” code written by human engineers. Wu argues that this approach has hit a ceiling.

“The world is too messy for hand-coded rules,” Wu explained. NVIDIA’s latest stack uses Vision-Language Models (VLMs) that allow the car to actually “understand” its surroundings. During the Woodside drive, when a construction worker used a hand gesture to wave the Mercedes through a temporary lane, the car didn’t just see an obstacle; it understood the intent. This “Common Sense AI” is powered by the same transformer architectures that drive the latest LLMs, but optimized for the millisecond-latency requirements of the road.

The Mercedes Partnership: A 2026 Milestone

The Mercedes CLA used in the demonstration represents the first fruits of a multi-year partnership. The MB.Drive Assist Pro is the industry’s first “Level 3-plus” system available in a mass-market sedan, capable of hands-free operation on highways and in complex urban environments.

Wu noted that the partnership is a blueprint for the “Ultra” tier of the automotive market. “Mercedes brings the luxury and the safety heritage; we bring the 2nm silicon and the neural networks.” This collaboration allows NVIDIA to gather real-world data from millions of miles driven by consumers, which is then fed back into the training loop, creating a data flywheel that finally rivals Tesla’s fleet.

Omniverse: The Virtual Proving Ground

One of the most technical segments of the interview focused on NVIDIA Omniverse. Wu revealed that for every mile the Mercedes drove on the 101, it had already driven ten million miles in simulation.

“Simulation is no longer just for testing edge cases,” Wu said. “It is where the AI is born.” Using digital twins of cities like San Francisco, NVIDIA can simulate rare “black swan” events, such as blinding rain, erratic pedestrians, or complex multi-car accidents, without ever putting a human at risk. This virtual-first approach has allowed NVIDIA to compress five years of development into eighteen months.

The interview concluded with a look toward the future. While the MacBook Neo and the HomePad are defining the consumer’s home, Wu is focused on the 2027 robotaxi launch. NVIDIA recently announced it would partner with a major mobility firm to test its own Level 4 robotaxi service, marking a departure from its “platform only” stance.

“Jensen often says there will eventually be a billion autonomous cars on the road,” Wu reflected. “Some will be owned, some will be shared, but we want the majority of them to be thinking with NVIDIA silicon.”

As the Mercedes pulled to a stop in downtown San Francisco, the car had completed the 30-mile journey without a single human intervention. For Xinzhou Wu, it wasn’t just a successful test drive; it was a proof of concept for a world where the car is no longer a machine, but a mobile AI companion.
