The AI That Chooses to Be Good

For years, the AI industry has struggled with a fundamental question: how do we ensure machines behave responsibly? The common approach has been to build powerful systems first and worry about ethics later, adding safety layers, moderation filters, or regulatory oversight once the technology is already deployed.

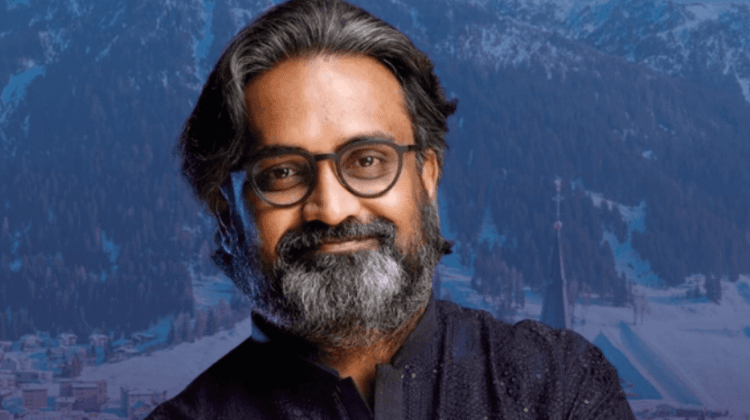

Natarajan believes this approach reveals a deeper flaw in how AI systems are designed.

“Every other approach to AI ethics is retroactive,” he says. “It is governance applied to a system that was already built without virtue. We are doing something categorically different: building the virtue first, and the intelligence second.”

Behind the philosophical name lies a technical architecture developed by Natarajan and his team at Orchestro.AI. The company has documented the framework through a growing patent portfolio of more than seventy inventions spanning artificial intelligence systems, logistics optimization, and supply-chain infrastructure.

Most AI models today are built to predict, optimize, or generate. They are designed around measurable objectives such as maximizing engagement, minimizing cost, or predicting the next most likely word. When such systems cause harm, it is rarely because they intend to do so; it happens because the objective function lacks moral context.

Angelic Intelligence proposes a different computational foundation. Instead of a single monolithic model governed by external rules, the architecture is composed of 27 specialized agents known as Digital Angels. Each agent represents a virtue drawn from different cultural and philosophical traditions.

These agents are not symbolic personas or chatbot characters. They are structured computational modules with defined responsibilities, weights, and decision protocols that actively shape how the system evaluates choices.

- Karuna (Compassion) – evaluates the human impact of efficiency-driven decisions.

- Dikaiosyne (Justice) – assesses whether outcomes are fair across different groups and communities.

- Patience – slows decision cycles to prevent impulsive optimization.

- Anavah (Humility) – detects overconfidence and forces acknowledgment of uncertainty.

- Fortitudo (Courage) – allows the system to challenge consensus when ethical clarity demands dissent.

- Ahimsa (Non-harm) – holds veto authority when predicted actions threaten human dignity.

When the AI system faces a decision, whether routing a shipment, recommending a candidate for a job, or moderating content, these agents deliberate together. Their perspectives are weighed, contested, and resolved through a governance protocol that Natarajan describes as “the computational equivalent of a council of elders.”

The Angelic Intelligence framework is built on several core principles:

- Virtue as the foundation. Ethics must exist at the first layer of computation rather than being added afterward.

- Distributed moral authority. No single agent controls the outcome; decisions emerge from collective deliberation.

- Cultural plurality. The virtues embedded in the system draw from Eastern, Western, Abrahamic, and Indigenous wisdom traditions.

- Responsibility grows with capability. As the system becomes more powerful, its ethical oversight becomes stronger.

- Long-term accountability. Decisions are evaluated not only for immediate efficiency but also for their long-term societal consequences.

A Career Inside the Optimization Machine

Natarajan’s thinking about AI ethics did not originate in theory alone. Before founding Orchestro.AI, he spent more than twenty-five years working inside large-scale corporate systems.

During his career he helped develop optimization platforms across companies such as Walmart, The Walt Disney Company, Coca-Cola, PepsiCo, Target Corporation, and American Eagle Outfitters.

These roles gave him a front-row seat to how algorithms shape human systems.

“The supply chain is a perfect laboratory for AI ethics,” he explains. “Every efficiency gain has a human cost somewhere. Every algorithm that reduces delivery time may also increase pressure on workers or drivers.”

After decades spent improving these systems, Natarajan began asking a deeper question: what does “better” actually mean when algorithms affect millions of lives?

The answer, he says, came from a personal memory: his mother standing outside a school office every day for an entire year to secure his education.

“No algorithm would recommend that strategy,” he recalls. “But it was the most powerful act of intelligence I have ever witnessed.”

A Structural Difference

Traditional AI safety methods often rely on training models using techniques such as reinforcement learning from human feedback or external safety rules. While these approaches can reduce harmful outputs, they do not fundamentally change the system’s underlying objective.

Angelic Intelligence attempts to address this problem at the architectural level. The Digital Angels participate directly in reasoning during runtime. They are not filters applied after decisions are made; they shape the decisions themselves.

Natarajan often explains the concept using a construction analogy.

“You can build a house and then install fire sprinklers,” he says. “Or you can build the house out of stone. Sprinklers are governance. Stone is virtue-native architecture.”

Within this system, the Angels generate probabilistic assessments based on their respective virtues. When conflicts arise, the governance protocol requires explicit reasoning, which can be logged and audited. Certain virtues, particularly Ahimsa (non-harm) and Dikaiosyne (justice), hold structural veto authority when decisions cross defined dignity thresholds.
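To make the description concrete, here is a minimal Python sketch of how weighted assessments, an audit log, and a veto could fit together. It is an illustration only, not Orchestro.AI's implementation: the article does not publish the actual protocol, so every name (`Assessment`, `Decision`, `deliberate`), the weights, the 0.2 dignity threshold, and the 0.5 approval cutoff are assumptions.

```python
from dataclasses import dataclass, field

# Everything below is hypothetical; the article gives no concrete
# weights, thresholds, or data structures.
VETO_VIRTUES = {"Ahimsa", "Dikaiosyne"}  # virtues the article says hold veto authority
DIGNITY_THRESHOLD = 0.2  # assumed: a veto fires when approval drops below this


@dataclass
class Assessment:
    virtue: str      # name of the agent's virtue
    approval: float  # score in [0, 1] that the action is acceptable
    weight: float    # relative influence of this agent
    reason: str      # explicit reasoning, kept for auditing


@dataclass
class Decision:
    approved: bool
    score: float
    vetoed_by: list
    audit_log: list = field(default_factory=list)


def deliberate(assessments, approve_at=0.5):
    """Combine agent assessments into one logged decision."""
    if not assessments:
        raise ValueError("at least one assessment is required")

    # Record each agent's position and stated reason.
    log = [f"{a.virtue}: approval={a.approval:.2f} ({a.reason})" for a in assessments]

    # A veto-holding virtue blocks the action regardless of the average.
    vetoes = [
        a.virtue
        for a in assessments
        if a.virtue in VETO_VIRTUES and a.approval < DIGNITY_THRESHOLD
    ]

    # Weighted average of all approvals.
    total_weight = sum(a.weight for a in assessments)
    score = sum(a.approval * a.weight for a in assessments) / total_weight

    approved = not vetoes and score >= approve_at
    if vetoes:
        log.append("veto by " + ", ".join(vetoes))
    return Decision(approved, score, vetoes, log)


decision = deliberate([
    Assessment("Karuna", 0.9, 1.0, "low impact on drivers"),
    Assessment("Dikaiosyne", 0.8, 1.0, "costs spread evenly across regions"),
    Assessment("Ahimsa", 0.1, 1.0, "route requires an unsafe shift length"),
])
print(decision.approved, decision.vetoed_by)  # False ['Ahimsa']
```

In this example the weighted average (0.6) would clear the approval cutoff on its own, but the Ahimsa veto still blocks the action, and the reason is preserved in `audit_log`. That is the distinction the article draws between a filter applied afterward and a constraint built into the decision itself.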

From Philosophy to Global Conversation

The concept of virtue-native AI has increasingly moved from theory into global policy discussions.

At the World Economic Forum Annual Meeting 2026, Natarajan presented the Angelic Intelligence framework across several international forums involving technology leaders, policymakers, and investors.

Recognition has also come from institutions including Forbes Middle East and the UK Houses of Parliament, where his work in artificial intelligence received formal acknowledgment.

According to Natarajan, the shift in reception has been striking.

“Two years ago, people heard ‘virtue-native AI’ and thought it was philosophical,” he says. “Now they ask for the patent numbers.”

The Discipline Behind the Vision

Despite his role in global AI discussions, Natarajan maintains a personal discipline that many observers find unexpected. Every morning before sunrise, he practices classical Indian painting.

The tradition emphasizes adherence to form, patience, and disciplined execution, principles that he believes mirror the structure of Angelic Intelligence.

“In classical painting, the forms are given,” he explains. “The creativity lies in the discipline of execution. Intelligence emerges within those constraints.”

Disclaimer: IBT Does Not Endorse The Above Content.
