Apple’s Camera-Equipped AirPods Reach Final Testing Phase

In a bold move to redefine the “wearable AI” market, Apple has reportedly advanced its camera-equipped AirPods to a late-stage development milestone. As of May 8, 2026, reports indicate that the tech giant has moved these experimental earbuds into Design Validation Testing (DVT). This is the final major design checkpoint before mass production, signaling that the hardware is almost ready for the public. However, while the physical earbuds are nearing completion, a significant bottleneck has emerged: the revamped, AI-powered Siri is not yet capable of handling the “visual intelligence” these earbuds require, likely pushing the launch into the final quarter of 2026.

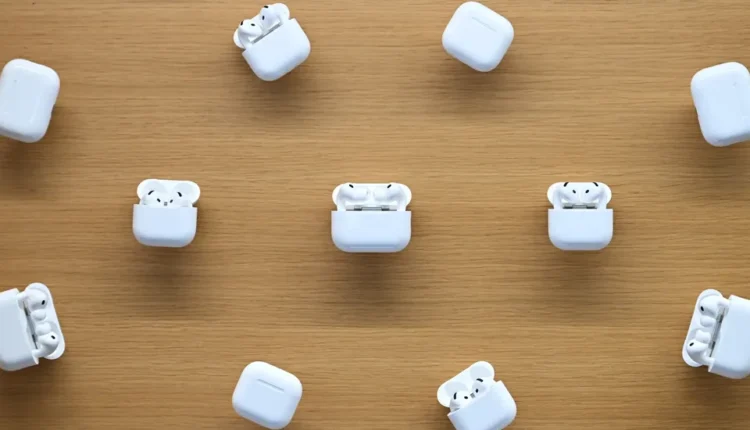

Unlike a standard camera meant for capturing memories, the sensors built into these new AirPods (informally dubbed “AirPods Ultra” or “AirPods Pro 3 with Cameras”) are designed to act as high-tech “eyes” for a user’s digital assistant.

Form Factor: The prototypes resemble the current AirPods Pro 3 but feature slightly longer stems to house the low-resolution optical sensors.

Dual-Lens Integration: Cameras are reportedly embedded in both the left and right earbuds to provide a broader field of view, allowing the AI to triangulate and identify objects more accurately.

The LED Indicator: To mitigate the massive privacy concerns associated with “hidden” cameras, Apple has integrated a small LED light on the stems. This light will illuminate whenever visual data is being processed or sent to the cloud, serving as a public signal that the device is “looking.”

The Use Case: Visual Intelligence and Context

The primary goal of this hardware is to bridge the gap between the digital and physical worlds without requiring a bulky headset like the Vision Pro. By feeding low-resolution visual data to Siri, the AirPods enable a new class of contextual AI features:

Culinary Assistance: A user could glance at the ingredients on their kitchen counter and ask, “Siri, what can I cook with this?” The AI would analyze the food items and suggest a recipe in real time.

Advanced Navigation: Instead of saying “turn left in 200 feet,” Siri could use the cameras to identify landmarks, telling the user to “turn left just past the blue coffee shop.”

Visual Reminders: The cameras could trigger reminders based on what they see. If you walk past a specific brand of detergent you previously added to a shopping list, Siri could chime in to remind you to pick it up.

The Siri Stumbling Block

Despite the hardware reaching the DVT phase, the “intelligence” part of Apple Intelligence is currently the weak link. Apple originally aimed for a first-half 2026 launch, but the timeline was derailed by the slow development of the new, revamped Siri.

The upgraded assistant, which now reportedly utilizes Google’s Gemini models under the hood for more complex reasoning, is currently scheduled for a September 2026 release alongside iOS 27. Internal sources suggest that if Siri’s “visual reasoning” remains inconsistent or slow, Apple leadership may hold the AirPods back even further to avoid a repeat of the “Hype-First” marketing backlash that led to a $250 million settlement earlier this year.

The Market Strategy: AirPods vs. Smart Glasses

Apple’s decision to put cameras in AirPods rather than launching smart glasses first is a calculated one. AirPods are already a ubiquitous, culturally accepted product with hundreds of millions of users. Convincing a user to buy “smarter” earbuds is a much lower hurdle than convincing them to wear a “face computer” or a camera-equipped pendant.

By hiding the AI inside a familiar form factor, Apple is effectively creating a “stealth” wearable. However, the project sits alongside a broader suite of AI hardware in development, including smart glasses and an AI pendant, which are expected to follow in 2027.

As of May 2026, the tech world’s eyes are on September. If the Siri overhaul succeeds in integrating visual intelligence seamlessly, these camera-equipped AirPods could represent the most significant leap in wearable tech since the original Apple Watch.

But the engineering constraints remain real. The company must prove it can balance the processing demands of real-time video analysis with the limited battery life of a tiny earbud, all while ensuring that “Siri with eyes” doesn’t become a privacy nightmare. For now, the hardware is ready to see; the question is whether the software is ready to understand.
