Critical Security Problems in AI Development

The leak of internal source code from Claude Code, an advanced AI coding assistant developed by Anthropic, has caused a major disruption in the rapidly expanding field of artificial intelligence. The company responded immediately, but the incident has already raised serious questions about security and trust in the AI industry. It also shows that organizations developing advanced AI systems need more effective security measures.

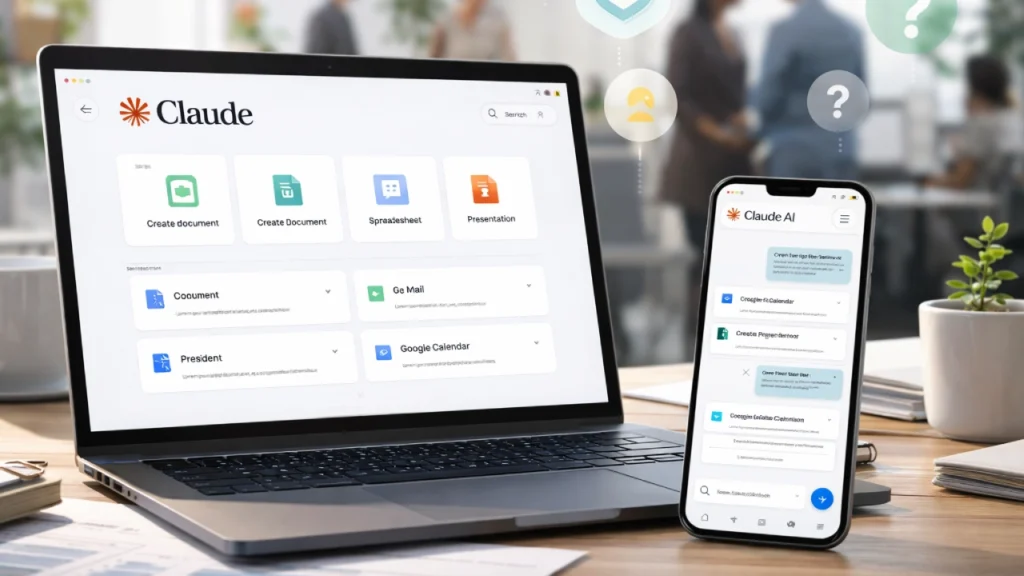

What is Claude Code

Claude Code is part of Anthropic’s suite of advanced AI tools, which are designed to help users work with data and get more done. It lets users build software through simple commands by writing code, fixing errors, and explaining complex programming concepts. It functions as an advanced coding assistant because it operates as an intelligent agent.

It can carry out multi-step tasks while keeping track of the context of the user’s work, acting much as a human collaborator would. This versatility makes Claude Code more valuable to users, and it also makes the tool a more sensitive target for security threats.

What Was The Actual Claude Code Leak

Reports indicate that parts of Claude Code’s internal source code were shared online without authorization. The leaked material may include details of the system architecture, its internal workings, and the code generation methods the AI uses to produce its outputs. Anthropic launched a major effort to get the leaked material taken down.

The company reportedly issued more than 8,000 takedown requests to websites and platforms that hosted the content. Once content is published online, however, it is nearly impossible to delete completely. Copies spread rapidly, which makes containment difficult.

Why This Leak Is A Big Deal

This is more than a basic data breach because it involves valuable intellectual property. AI systems like Claude Code are built on years of research, testing, and large investments. A company’s internal code is among its most valuable assets, and public access to it can have severe business repercussions.

Competitors can learn how the system works and use that knowledge to improve their own products. Malicious actors can study the code to find weaknesses and exploit them. Even a small leak can shift the balance of competition in AI.

The Growing Security Problem in AI

AI development has advanced faster than the security systems meant to protect it. Companies are under pressure to ship new features that improve performance and keep them ahead of competitors. Building effective internal security takes time, and it often lags behind that pace.

AI systems require more complex security measures than standard software. The entire stack needs protection because it involves extensive datasets, multiple training pipelines, and several model layers. As AI systems become more powerful, the risks of leaks and cyber threats grow with them.

The leak has intensified the ongoing debate over AI transparency versus security. On one hand, transparency helps researchers and developers understand AI systems better, which can improve safety, build trust, and encourage collaboration. On the other hand, as this incident shows, exposing a system’s internals can hand an advantage to competitors and attackers.

Industry-Wide Impact

The incident affects more than just Anthropic. It is likely to prompt stronger regulation, closer monitoring, and tighter controls to safeguard critical information. As AI technology becomes essential to their operations, organizations will allocate additional resources toward cybersecurity initiatives. Over time, this should make AI development more secure.

What This Means For Users

Developers and regular users are likely to see limited direct effects. There is currently no evidence that user data has been affected. Even so, the incident may change how users approach AI tools, as security, privacy, and trust become more important to them.

Final Thoughts

Security is a crucial element that the quest for smarter AI systems cannot skip. Powerful tools bring equally serious risks that must be addressed. The rest of the AI industry faces the same challenge. For Anthropic, the incident is a test of its ability to handle a crisis and recover from it.
