GitHub Copilot Controversy: Shocking AI Issues Exposed

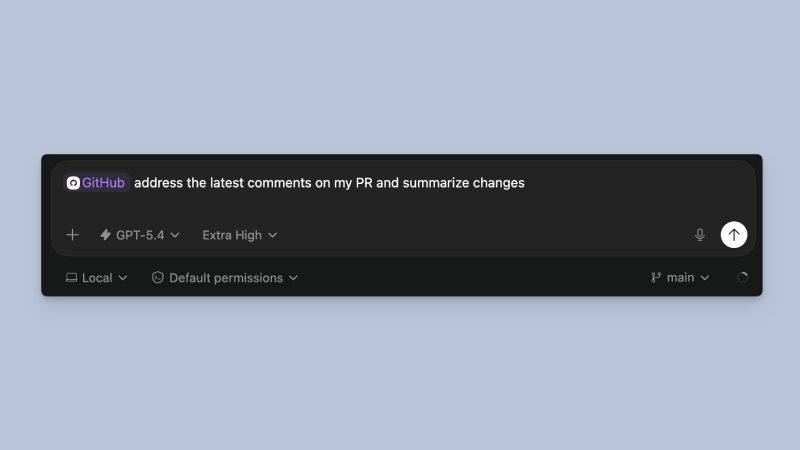

The GitHub Copilot controversy centers on one of the most widely used AI coding assistants. Developers discovered unexpected messages inserted into pull requests, which are normally used for reviewing and managing code changes. The messages were presented as suggestions, but many developers felt they amounted to advertising and were not appropriate for professional coding.

GitHub faced a strong backlash as users questioned whether AI tools should be allowed to modify their work without permission. Developer communities paid close attention to the issue, which fed growing concerns about AI tools and their use in everyday work environments.

What Actually Happened

The controversy began when developers noticed that Copilot was adding automated text to pull requests. The additions were unrelated to the code and appeared as suggestions. Pull requests are expected to be clean and focused solely on code changes, so extra content creates confusion and other problems for developers.

Tools like Copilot are meant to assist developers, not to add content on their behalf. The messages appeared without any clear user control, so many developers found them unnecessary and unwanted.

Reaction Of The Developers

The reaction from developers was strong and largely negative. Many felt that the issue had disrupted their workflow. The main concerns raised were:

- Pull requests should remain simple and focused on code changes

- AI tools should not be adding automated content

- Paid tools should not contain anything resembling ads

Developers raised their concerns on Reddit and other platforms, where the issue gained attention. Several users did not object to the additional messages themselves but complained about the lack of control.

GitHub’s Response

GitHub responded soon after the issue gained public attention. The company immediately rolled back the feature and removed the messages from pull requests. Microsoft also clarified that this was not meant to be advertising and was a bug or an unintended feature.

This response helped calm the backlash, though many developers remained cautious. The incident also led people to question how such a feature was introduced in the first place.

Why This Matters

This issue might seem small from afar, but in software development even minor changes like this can have a large impact. Pull requests are a key part of the development process. They are used for reviewing code, discussing changes, and maintaining quality. Any unexpected content in this space can:

- Distract developers

- Reduce clarity

- Slow down collaboration

Developers therefore expect complete control over the messages that appear in their pull requests.

The Growing Concern: AI Overreach

This incident highlights a larger question: the role of AI in professional work environments. As AI systems grow more powerful, they have started to do more than just assist users. They are increasingly taking actions on their own, which can create problems if not managed properly.

This raises a few important questions regarding:

- AI acting without user permission

- The amount of control users have

- The limits that should be set

Building and keeping trust is very important to many developers. That trust can be badly damaged if AI tools start to act in unexpected ways.

Impact On AI Tools And Developers

Because GitHub Copilot remains a popular and well-known tool, this issue will most likely be overlooked and fade in importance. Even so, users will continue to pay close attention to how these AI tools behave. Developers prefer tools that are predictable, helpful, and easy to control.

Any feature that disrupts their work can feel intrusive and draw negative reactions. The quick rollback also shows that companies are willing to make necessary changes in response to user feedback.

Final Thoughts On The GitHub Copilot Controversy

This controversy shows that even well-known and popular tools must be carefully designed and monitored. The feature might not have been intentional, but it shows how sensitive users are to unrequested changes in their workflow. Developers rely on clarity and control in their work environments, so AI tools are expected to support the process, not disrupt it.
